Content Systems: Integrate AI Into Editorial Workflows

Why integrating AI into editorial workflows matters for business owners

Every organization that publishes content faces the same pressure: do more with less, keep messages on-brand, and move faster than competitors. Add the emergence of editorial AI tools, the promise of dramatic productivity gains, and the risks of inconsistent quality and compliance failures, and you have a real systems problem, not just a talent problem.

Companies that adopt AI-assisted content workflows have reported 2–5x faster content production cycles in pilot programs, while top-performing teams retain human review at critical gates to protect brand and accuracy. For Florida realtors, brokers, and growth-focused founders, that multiplier can translate to faster listings, quicker ad creative iterations, and more timely lead nurture.

The current landscape for editorial AI and content systems

Editorial AI tools are no longer novelty features. From language models that draft blog posts, to image generators, to workflow automation hooks for CMS platforms, the tooling stack has matured. This maturity creates opportunity — and complexity.

Key dynamics shaping adoption today:

- Tool proliferation: teams juggle multiple AI models, creative tools, and APIs rather than a single unified product.

- Regulation and brand risk: accuracy, copyright, and compliance checkpoints are non-negotiable for regulated industries and local businesses.

- Automation expectations: stakeholders expect content automation to move beyond first drafts into publishing tasks, metadata enrichment, and A/B testing pipelines.

That means a content system must be deliberately designed — with roles, prompts, QA, governance, and automation patterns — instead of retrofitting tools into chaotic processes.

Framework: Designing content systems for businesses that use AI

CreativeWolf recommends a systems approach that balances three pillars: human creativity, brand controls, and AI tooling. Treat AI as a capability layer in the editorial stack, not the author.

The three-pillar model explained

- Human creativity — Subject matter experts, editors, and designers who provide judgement, nuance, and final approval.

- Brand controls — Tone-of-voice guides, legal constraints, and content standards enforced through checklists and governance rules.

- AI tooling — Models for drafting, summarization, image generation, metadata, and automation that accelerate repetitive work.

Roles and RACI adapted for AI content workflows

Map responsibilities clearly so AI outputs are stewarded at each gate. Typical role set:

- Editor-in-Chief — Owns the editorial calendar and final content sign-off.

- AI Prompt Engineer — Crafts and maintains reusable prompts and templates for consistency.

- Brand Steward — Enforces tone, legal, and regulated content rules.

- Subject Matter Expert (SME) — Validates technical accuracy or local-market facts.

- SEO Lead — Supplies keyword strategy, meta briefs, and on-page guidance.

- Designer/Multimedia — Produces visual assets and ensures accessibility compliance.

- QA Reviewer — Performs final checks and publishes.

How to build an editorial AI workflow that scales

The core of an operational content system is the editorial calendar, with AI integrated at specific repeatable touchpoints. Below is a practical, repeatable workflow pattern that teams can adapt.

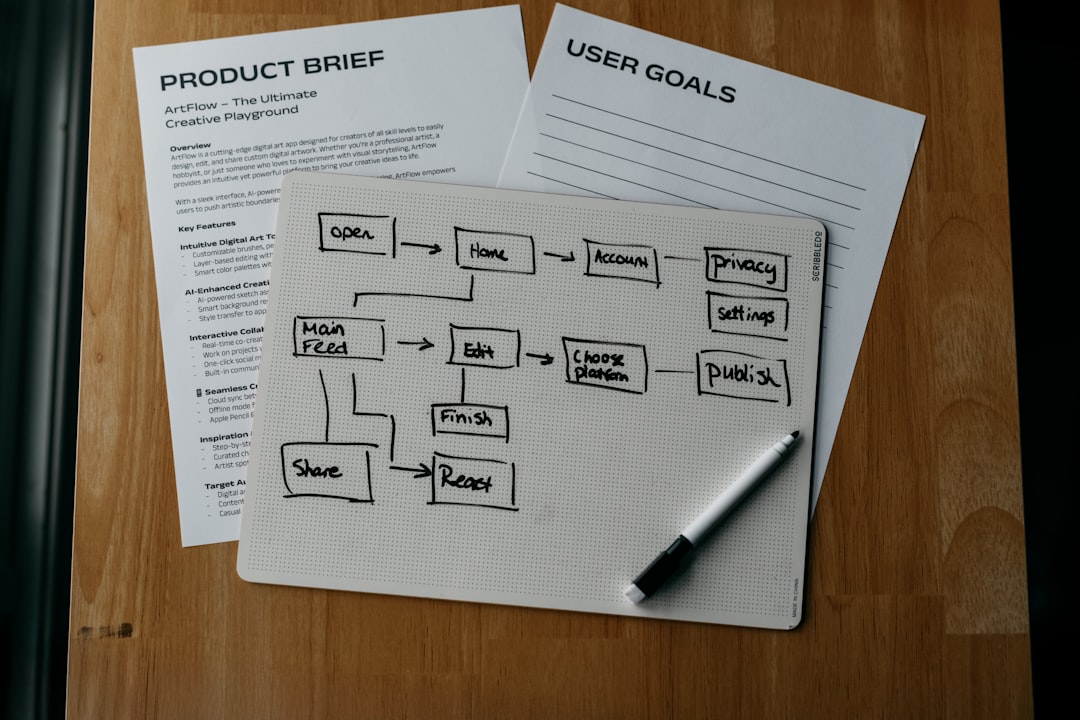

Workflow pattern: Idea → Draft → Review → Publish → Learn

- Idea capture and prioritization — Use an intake form that records goal, audience, SEO keywords, owner, and deadline. Automate assignment to the editorial calendar.

- AI-assisted drafting — Prompt-engineered models produce outlines, first drafts, and social captions. Maintain prompt templates per content type.

- Human review and edits — Editors and SMEs revise for accuracy, narrative, and brand voice.

- QA and compliance gate — Apply the approval checklist (legal, accessibility, claims, citations).

- Publish and automate distribution — Push to CMS, social schedulers, and ad platforms with automated metadata and tracking.

- Measure and iterate — Capture velocity and quality metrics to optimize prompts and templates.

Design workflows so AI accelerates repeatable work and humans own decision points where brand, accuracy, and customer trust matter most.
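One way to make the stages above enforceable is a small state machine. The sketch below is illustrative only: the stage names follow the workflow pattern, while the "send back" transitions and the rule that entering QA or publishing needs a named approver are assumptions, not part of any specific CMS.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    IDEA = "idea"
    DRAFT = "draft"
    REVIEW = "review"
    QA = "qa"
    PUBLISHED = "published"
    LEARN = "learn"


# Allowed moves between stages. The "send back" edges (REVIEW -> DRAFT and
# QA -> REVIEW) are an assumption; the workflow only names the forward path.
TRANSITIONS = {
    Stage.IDEA: {Stage.DRAFT},
    Stage.DRAFT: {Stage.REVIEW},
    Stage.REVIEW: {Stage.QA, Stage.DRAFT},
    Stage.QA: {Stage.PUBLISHED, Stage.REVIEW},
    Stage.PUBLISHED: {Stage.LEARN},
    Stage.LEARN: set(),
}

# Entering these stages requires a named human approver (assumed policy).
NEEDS_SIGNOFF = {Stage.QA, Stage.PUBLISHED}


def advance(current: Stage, target: Stage, approved_by: Optional[str] = None) -> Stage:
    """Return the new stage, or raise if the move is not allowed."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    if target in NEEDS_SIGNOFF and not approved_by:
        raise PermissionError(f"{target.value} requires a human sign-off")
    return target
```

With this shape, an automation can call `advance` freely for the AI-driven steps, but a draft cannot skip review or go live without a person attached to the decision.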

Practical steps: templates, prompts, and QA checkpoints

Below are concrete artifacts your team can implement this week.

Reusable prompt templates

Store these in a shared prompt library. Encourage version control and change logs.

- Outline prompt (blog): "Create a detailed 7-section outline for a 1,200-word blog on [TOPIC], targeting [AUDIENCE], primary keyword [KEYWORD]. Include meta description, suggested subheadings, and one data-driven stat to cite."

- Local listing rewrite (realtor): "Rewrite the property listing copy in a confident, local voice. Emphasize [FEATURES] and include a call-to-action for scheduling a showing. Keep it <150 words."

- Ad headline generator: "Generate 10 short headlines (under 30 characters) for a Facebook lead ad promoting [SERVICE], using urgency and a local angle like [LOCATION]."

- Image prompt (AI art): "Create a high-res hero image of [PROPERTY TYPE] in [CLIMATE], golden-hour lighting, clean modern composition, for a real-estate landing page."
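A shared prompt library can start as a plain dictionary plus a function that fills the bracketed placeholders. This is a minimal sketch: `PROMPT_LIBRARY`, `render_prompt`, and the version field are hypothetical names chosen for illustration, and the template text is copied from the ad headline prompt above.

```python
import re

# Hypothetical in-memory prompt library; keys and version numbers are illustrative.
PROMPT_LIBRARY = {
    "ad_headlines": {
        "version": 1,
        "template": (
            "Generate 10 short headlines (under 30 characters) for a Facebook "
            "lead ad promoting [SERVICE], using urgency and a local angle "
            "like [LOCATION]."
        ),
    },
}

# Matches upper-case placeholders such as [TOPIC] or [PROPERTY TYPE].
PLACEHOLDER = re.compile(r"\[([A-Z][A-Z ]*)\]")


def render_prompt(name: str, values: dict) -> str:
    """Fill every [PLACEHOLDER] in the named template, or raise if one is missing."""
    template = PROMPT_LIBRARY[name]["template"]
    needed = set(PLACEHOLDER.findall(template))
    missing = needed - set(values)
    if missing:
        raise KeyError(f"missing values for: {sorted(missing)}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)
```

Failing loudly on a missing value keeps a half-filled prompt such as "promoting [SERVICE]" from ever reaching a model.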

Approval gate template

Use this checklist as a staging gate before publishing. If the gate is automated, fail the publish step whenever any critical item is unchecked.

- Fact-checks: key claims have citations or SME sign-off.

- Brand voice: copy aligns with tone examples (pass/fail).

- Legal/compliance: disclaimers present where required.

- SEO basics: meta title, meta description, slug, H1, internal link plan present.

- Accessibility: image alt text, heading structure, and contrast checks.

- AI disclosure: where required, include a short disclosure statement about AI assistance.
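The checklist above can back an automated gate that blocks publishing. The following is a sketch under stated assumptions: `GateItem`, `GateFailed`, and `enforce_gate` are hypothetical names, and which items count as critical is a decision for your team rather than something this article prescribes.

```python
from dataclasses import dataclass


@dataclass
class GateItem:
    name: str
    passed: bool
    critical: bool = True  # assumption: items are critical unless marked otherwise


class GateFailed(Exception):
    """Raised when a critical checklist item has not passed."""


def enforce_gate(items: list) -> list:
    """Raise if any critical item is open; otherwise return open non-critical items."""
    blocking = [item.name for item in items if item.critical and not item.passed]
    if blocking:
        raise GateFailed("unchecked critical items: " + ", ".join(blocking))
    return [item.name for item in items if not item.critical and not item.passed]
```

A publishing script would call `enforce_gate` immediately before the push to the CMS and treat `GateFailed` as a hard stop, while the returned list can be logged as follow-up work.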

QA checkpoint checklist (quick pass/fail criteria)

- Accuracy: No factual errors in the lede or data points.

- Tone: Matches one of the approved tone samples.

- Uniqueness: Passes a quick plagiarism check; content is not duplicated from competitors.

- Performance tags: Target keywords present in header and meta.

- Publish readiness: Images optimized, CTA links live, tracking pixels in place.
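Some of these pass/fail checks are mechanical enough to script. As one example, the performance-tags check could be sketched as below; `keyword_coverage` is a hypothetical helper, and plain case-insensitive substring matching is an assumption that will miss plurals and reworded variants.

```python
def keyword_coverage(keyword: str, h1: str, meta_title: str, meta_description: str) -> dict:
    """Report whether the target keyword appears in each field (case-insensitive)."""
    needle = keyword.casefold()
    return {
        "h1": needle in h1.casefold(),
        "meta_title": needle in meta_title.casefold(),
        "meta_description": needle in meta_description.casefold(),
    }


def passes_performance_tags(coverage: dict) -> bool:
    """Pass only when the keyword is present in every checked field."""
    return all(coverage.values())
```

Checks like accuracy and tone still need a human reviewer; only the presence checks lend themselves to this kind of automation.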

Automation patterns that reduce manual work

Automation should handle orchestration and repetitive enrichment tasks — not final content approval. Common patterns we implement:

- Content scaffolding: Automated outline and tag generation when an idea is added to the calendar.

- Metadata enrichment: Auto-fill alt text, meta description suggestions, and canonical tags via the AI model and SEO rules.

- Versioning and rollback: Store AI-generated drafts as separate versions in your CMS with automatic diffs.

- Publishing pipelines: Approvals trigger automated publishing to staging, then to live after QA sign-off.

- Feedback loop: Post-publish performance data (CTR, engagement) is fed into a log that informs prompt improvements.
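For the versioning pattern, Python's standard `difflib` module can produce the diff between a stored AI draft and the human-edited version. This is a minimal sketch: `draft_diff` is a hypothetical helper, and how versions are stored in your CMS is outside its scope.

```python
import difflib


def draft_diff(ai_draft: str, edited: str) -> str:
    """Return a unified diff between the AI draft and the edited version."""
    lines = difflib.unified_diff(
        ai_draft.splitlines(),
        edited.splitlines(),
        fromfile="ai_draft",
        tofile="edited",
        lineterm="",
    )
    return "\n".join(lines)
```

Storing this diff alongside each version gives editors a quick view of what changed and gives prompt owners evidence of where drafts are consistently being corrected.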

How to measure success when AI is in the loop

Traditional content metrics matter — but when AI participates, add velocity and quality controls to the measurement mix.

Core metrics to track

- Content velocity: Time from idea to publish, and number of content pieces published per month per owner.

- First-draft ratio: Percentage of content that requires <25% human rewrite after AI draft.

- Quality index: Composite score combining editorial QA pass rate, user engagement, and SEO ranking changes.

- Compliance incidents: Number of legal or brand violations found post-publish.

- Reuse rate: Percentage of AI-created assets reused across channels.

Track these over time to spot degradation in AI outputs or improvements after prompt tuning.
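Two of these metrics are straightforward to compute from data most teams already have. The sketch below makes a notable assumption: it approximates "human rewrite" as one minus the word-level similarity reported by `difflib.SequenceMatcher`, which is a rough proxy rather than an agreed definition. The function names are hypothetical.

```python
from datetime import datetime
from difflib import SequenceMatcher
from statistics import mean


def rewrite_fraction(ai_draft: str, published: str) -> float:
    """Approximate share of the text that changed: 1 minus word-level similarity."""
    matcher = SequenceMatcher(None, ai_draft.split(), published.split())
    return 1.0 - matcher.ratio()


def first_draft_ratio(pairs: list, threshold: float = 0.25) -> float:
    """Share of (draft, published) pairs whose rewrite fraction is under the threshold."""
    if not pairs:
        raise ValueError("need at least one (draft, published) pair")
    light = sum(1 for draft, final in pairs if rewrite_fraction(draft, final) < threshold)
    return light / len(pairs)


def mean_hours_to_publish(items: list) -> float:
    """Average hours between idea capture and publish for (idea_at, published_at) pairs."""
    return mean((published - idea).total_seconds() / 3600 for idea, published in items)
```

Because the similarity measure is only a proxy, it is more useful for spotting trends after a prompt change than for judging any single piece.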

Real-world example: a local brokerage streamlines listings

A mid-sized Florida brokerage implemented a content system where agents submit listing intake forms. AI generated listing drafts, social captions, and a hero image prompt. Editors reviewed the copy and SMEs confirmed neighborhood facts. This cut the average time from intake to live listing from 3 days to 12 hours, and a mandatory QA gate for investment properties reduced compliance issues.

Key wins: higher listing velocity, more consistent brand voice across agents, and measurable uplift in listing page visits from improved meta descriptions.

Risks, governance, and sustainable practices

AI introduces operational risk when teams assume models are correct. Build governance into the workflow:

- Maintain a model inventory: which models are used for which tasks and why.

- Version prompts and archive changes with rationale and performance notes.

- Set escalation rules: if AI suggests a claim, it requires SME verification.

- Train humans: invest in prompt engineering and AI literacy for editors and marketers.
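The model inventory and prompt versioning items can share one record per prompt. The following is a sketch only: `PromptRecord` and `PromptVersion` are hypothetical structures, and the fields are one plausible reading of "which models are used for which tasks" and "archive changes with rationale".

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    rationale: str  # why the prompt changed, including performance notes
    changed_on: date


@dataclass
class PromptRecord:
    name: str
    model: str  # which model this prompt runs against (inventory entry)
    task: str   # what the model is used for
    history: list = field(default_factory=list)

    def revise(self, text: str, rationale: str, changed_on: date) -> PromptVersion:
        """Append a new version; refuse changes that come without a rationale."""
        if not rationale.strip():
            raise ValueError("a rationale is required for every prompt change")
        version = PromptVersion(len(self.history) + 1, text, rationale, changed_on)
        self.history.append(version)
        return version

    @property
    def current(self) -> PromptVersion:
        """The most recent version of the prompt."""
        return self.history[-1]
```

Requiring a rationale at write time is a small design choice that keeps the archive useful: old versions stay in `history`, so a rollback is a matter of revising back to earlier text with a note explaining why.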

Where content systems and editorial AI are heading

Expect AI to move from assistant to collaborator: models will propose data-backed angles, perform semantic SEO, and create modular content blocks optimized for different channels. Systems that win will be those that treat AI as a flexible engine with strong human governance and measurable KPIs.

Teams that codify prompts, approval gates, and automation patterns now will be well positioned to scale content without losing brand integrity or compliance.

Next practical move for busy business owners

If your team is ready to reduce time-to-publish, preserve brand trust, and harness editorial AI tools safely, start with a short diagnostic: map your current editorial process, list the repetitive tasks, and run a pilot integrating one AI capability with a single approval gate.

Need help? Schedule an AI Marketing Strategy Call with CreativeWolf to audit your editorial flow, design an AI-integrated content system tailored to your business, and get a 90-day rollout plan that includes templates, prompts, and measurement frameworks.

Internal resources you may find useful:

- AI Marketing Services — end-to-end support for integrating AI into marketing systems.

- Editorial AI Audit — a short assessment to map risk and opportunities.

- Prompt Engineering Masterclass — train your team to build and maintain reusable prompts.